The Transformational Impact of Copilot on Data Security

Microsoft 365 Copilot changes how information is accessed in enterprise environments by drawing on Microsoft Graph. It analyzes, synthesizes, and contextualizes data scattered across SharePoint, OneDrive, Teams, and Exchange, always within the permissions already granted to each user.

However, this capability exposes a critical challenge: Copilot grants no new access permissions, but it instantly turns all content a user can already reach into actionable information. In organizations where data governance has gaps, this can lead to unintended exposure of confidential information.

Warning

Copilot acts as an amplifier of existing permissions. Any data accessible to a user can be surfaced by the AI, even if that user never opened it before.
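To make the amplification effect concrete, here is a minimal sketch in Python. It is not how Copilot or Microsoft Graph is implemented. The `Item` class, the `reachable_items` function, and the sample data are hypothetical, and the model assumes access comes only from direct grants, group membership, or an "Everyone" grant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """Hypothetical content item with the principals granted access to it."""
    name: str
    granted_to: frozenset  # user names, group names, or "Everyone"


def reachable_items(user, groups, items):
    """Return the items a user can already open, directly or through a group."""
    principals = {user, "Everyone"} | set(groups)
    return [item for item in items if item.granted_to & principals]


items = [
    Item("Q3 roadmap.docx", frozenset({"alice"})),
    Item("Salary review.xlsx", frozenset({"Everyone"})),  # overshared long ago
    Item("Board minutes.pdf", frozenset({"executives"})),
]

# Alice never opened the salary file, but she has always been able to,
# so an assistant working within her permissions could draw on it.
names = [item.name for item in reachable_items("alice", ["marketing"], items)]
print(names)
```

In this toy model no permission changes, yet the overshared file sits in the same result set as the file Alice was meant to see.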

Identifying Root Causes of Oversharing

Data oversharing in Microsoft 365 environments rarely results from deliberate malicious actions. Rather, it stems from the accumulation of sharing decisions made over time, without systematic review.

Factors contributing to oversharing include:

- Default sharing settings that are too permissive at the site and library level

- Orphaned SharePoint sites without active owners to supervise access

- Obsolete inherited permissions never audited or updated

- Sensitive content stored in open collaborative spaces

- Lack of classification of data according to sensitivity level
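The factors listed above can be expressed as simple checks over a site inventory. The sketch below assumes a hypothetical `Site` record exported from whatever reporting tool is in use. The field names, the link scope values, and the 365-day review threshold are illustrative assumptions and do not come from a Microsoft API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Site:
    """Hypothetical inventory record for one SharePoint site."""
    url: str
    default_link_scope: str  # assumed values: "anyone", "organization", "specific_people"
    active_owners: int
    last_permission_review: Optional[date]
    visibility: str  # assumed values: "public", "private"
    holds_sensitive_content: bool
    sensitivity_label: Optional[str]


def oversharing_flags(site, today, max_review_age_days=365):
    """Return the oversharing risk factors that apply to a site."""
    flags = []
    if site.default_link_scope in {"anyone", "organization"}:
        flags.append("permissive default sharing")
    if site.active_owners == 0:
        flags.append("no active owner")
    review = site.last_permission_review
    if review is None or (today - review).days > max_review_age_days:
        flags.append("permissions not reviewed recently")
    if site.holds_sensitive_content and site.visibility == "public":
        flags.append("sensitive content in open space")
    if site.sensitivity_label is None:
        flags.append("unclassified")
    return flags


legacy = Site(
    url="https://contoso.example/sites/old-project",  # placeholder URL
    default_link_scope="organization",
    active_owners=0,
    last_permission_review=None,
    visibility="public",
    holds_sensitive_content=True,
    sensitivity_label=None,
)
flags = oversharing_flags(legacy, today=date(2025, 1, 1))
print(flags)
```

A site that accumulates several flags is a natural candidate for review before Copilot is enabled for its members.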

Before Copilot's arrival, this data remained fragmented and difficult to exploit. AI fundamentally changes the game by enabling instant aggregation, summarization, and reformulation of this information, thus creating new exposure vectors.

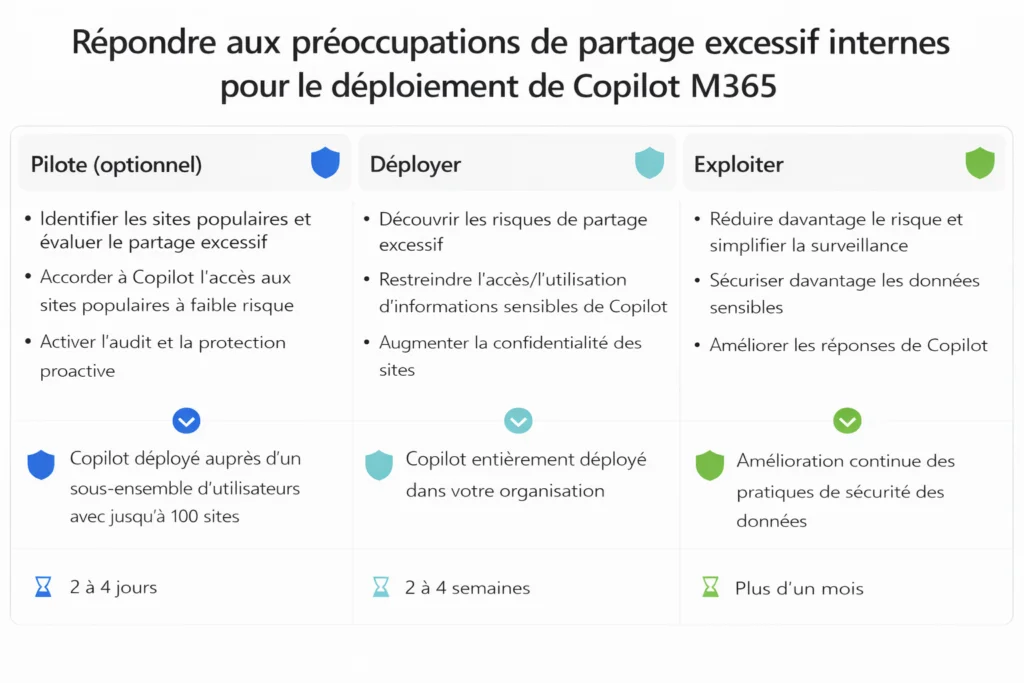

Progressive Three-Phase Governance Methodology

Microsoft advocates a structured approach to deploy Copilot while controlling security risks. This methodology breaks down into three distinct and complementary phases.

Phase 1: Controlled Piloting

The pilot phase forms the foundation of any secure Copilot deployment. It consists of activating AI for a restricted number of users within a carefully defined data perimeter.

- Pilot group selection

- Perimeter definition

- Intensive monitoring

- Control evaluation

This phase frequently reveals excessive access to resources mistakenly considered adequately protected, enabling security strategy adjustments before broader deployment.

Phase 2: Secure Scale Deployment

The deployment phase aims to extend Copilot usage across the entire organization while simultaneously strengthening data governance.

Strategic actions in this phase include:

- Review of default sharing settings for all Microsoft 365 services

- Systematic deployment of sensitivity labels across all content

- Proactive access restriction to content classified as critical or confidential

- User training on sharing best practices in a generative AI context

Tip

Prioritize a "Zero Trust" approach: every access must be justified and regularly re-evaluated, particularly in an environment where AI can exploit all granted permissions.

Phase 3: Continuous and Adaptive Governance

Copilot governance cannot be treated as a one-time project. It requires ongoing monitoring and adjustment, because data and permissions change constantly in dynamic environments.

This operational phase enables:

- Proactive monitoring of data exposure over time

- Automated detection of new oversharing cases

- Automated correction of non-compliant configurations

- Regular reporting to management teams on governance status

Without this continuous monitoring, oversharing inevitably reappears, compromising the benefits of previous phases.
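One way to picture automated detection is to compare two permission snapshots and alert on newly added broad grants. The following is a minimal sketch under stated assumptions: the snapshots are plain sets of `(item, principal)` pairs produced by some export process, and the `BROAD_PRINCIPALS` set and `new_exposures` function are hypothetical names chosen for illustration.

```python
# Principals treated as "broad" in this sketch; adjust to the tenant's own groups.
BROAD_PRINCIPALS = {"Everyone", "Everyone except external users"}


def new_exposures(previous, current):
    """Return (item, principal) grants present now but absent from the last snapshot."""
    return sorted(current - previous)


previous = {("Budget.xlsx", "finance-team")}
current = {
    ("Budget.xlsx", "finance-team"),
    ("Budget.xlsx", "Everyone"),  # newly overshared since the last snapshot
    ("Plan.docx", "alice"),       # new but narrowly scoped
}

added = new_exposures(previous, current)
alerts = [(item, who) for item, who in added if who in BROAD_PRINCIPALS]
print(alerts)
```

Running the comparison on a schedule is what turns a one-time cleanup into continuous governance: only the difference since the last run needs attention.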

Technological Arsenal for Oversharing Control

Microsoft Purview: The Pillar of Data Governance

Microsoft Purview is the central tool for governing Copilot. Its capabilities include:

- Automatic discovery of sensitive data across all Microsoft 365 services

- Continuous assessment of exposure risks based on permissions and content

- Application of DLP (Data Loss Prevention) policies adapted to generative AI scenarios

- Granular control of content usage by Copilot via sensitivity labels

| Feature | Without Purview | With Purview |
|---|---|---|
| Sensitive data identification | Manual and incomplete | Automatic and comprehensive |
| Copilot control | Limited to permissions | Granular by sensitivity label |
| Access audit | Reactive | Proactive with alerts |
| Remediation | Manual | Automated according to policies |

Sensitivity labels ensure protections remain applied even when files are moved, copied, or shared, creating a persistent security layer.

SharePoint Advanced Management: Controlling the Main Source of Oversharing

SharePoint often represents the primary source of oversharing in Microsoft 365 environments. Advanced management features offer:

- Deep analysis of permission status at all hierarchy levels

- Automatic identification of inactive sites or those lacking active owners

- Programmatic reduction of content exposure according to predefined criteria

- Automated lifecycle management of sites and their content

These controls prove essential for limiting the attack surface accessible to Copilot while maintaining team productivity.

Operational Framework for IT Teams

Effective oversharing reduction relies on implementing reproducible and measurable processes.

Technical Best Practices

- Periodic permission audits

- Label standardization

- Adaptive DLP policies

- Remediation automation

Key Performance Indicators (KPIs)

To measure governance effectiveness, monitor:

- Percentage of content labeled with appropriate sensitivity labels

- Number of SharePoint sites without active owners (target: 0)

- Average detection time for new oversharing cases

- Automatic correction rate for non-compliant configurations
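The four indicators above reduce to simple arithmetic once the underlying counts are available. The sketch below assumes those counts come from existing reports. The `governance_kpis` function, its parameter names, and the sample figures are hypothetical.

```python
from statistics import mean


def governance_kpis(items_total, items_labeled, sites_without_owner,
                    detection_hours, cases_total, cases_auto_fixed):
    """Compute the four governance KPIs from raw counts."""
    return {
        "labeled_pct": round(100 * items_labeled / items_total, 1) if items_total else 0.0,
        "ownerless_sites": sites_without_owner,
        "avg_detection_hours": round(mean(detection_hours), 1) if detection_hours else None,
        "auto_fix_pct": round(100 * cases_auto_fixed / cases_total, 1) if cases_total else 0.0,
    }


# Illustrative figures only, not measurements from a real tenant.
kpis = governance_kpis(
    items_total=2000,
    items_labeled=1500,
    sites_without_owner=3,
    detection_hours=[4, 12, 8],
    cases_total=50,
    cases_auto_fixed=40,
)
print(kpis)
```

Tracking these values per reporting period shows whether governance is improving or whether oversharing is creeping back.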

Good to Know

Mature Copilot governance should enable detection and automatic correction of 80% of oversharing cases, significantly reducing operational burden on IT teams.

Organizational Dimension of Governance

Technology alone does not guarantee effective Copilot governance. A holistic approach requires:

Organizational Processes

- Clear definition of roles and responsibilities in data management

- Content owner accountability with associated performance metrics

- Continuous user training on excessive sharing risks in an AI context

- A governance committee that includes IT, security, legal, and business units

Awareness and Training

Users must understand that in a generative AI environment, every granted permission can have amplified consequences. Copilot can reveal patterns and connections in data that were not immediately apparent during initial sharing.

Important

User training must emphasize that Copilot can exploit data shared months or years ago, creating unexpected exposures if permissions have not been regularly reviewed.

Toward Mature and Sustainable Governance

Microsoft 365 Copilot acts as both a productivity accelerator and a test of an organization's data governance maturity. Oversharing predates AI, but Copilot makes it immediately visible and exploitable at an unprecedented scale.

By structuring governance around methodical piloting, secure deployment, and continuous operation, organizations can significantly reduce risks while maximizing the value brought by generative AI.

Robust governance is not an obstacle to technological innovation. It is the indispensable prerequisite for confident and sustainable adoption of Microsoft 365 Copilot in the modern enterprise.